Editor’s note: This guest post by J. E. R. Staddon is part of the “How to Reform U.S. Science Funding” series.

Tech megastar Peter Thiel, in a recent interview, said that he no longer trusts science. Lesser luminaries have said much the same. The reasons for this distrust are many: fraud (Thiel mentioned a particularly egregious case involving the President of Stanford University), thousands of retracted papers (most justified, some not), massive failure to replicate studies, and weak experimental and analytic methods. Not to mention the bad advice of the medical science establishment during the COVID epidemic, and the failure of our leaders to remember Winston Churchill’s maxim: “Scientists should be on tap, but not on top.”

Many fields, especially in the social sciences, have split into multiple subfields with no mutual contact, so truth has in many cases become relative, different for each bubble. Overall, the pace of scientific advance also seems to be slowing.

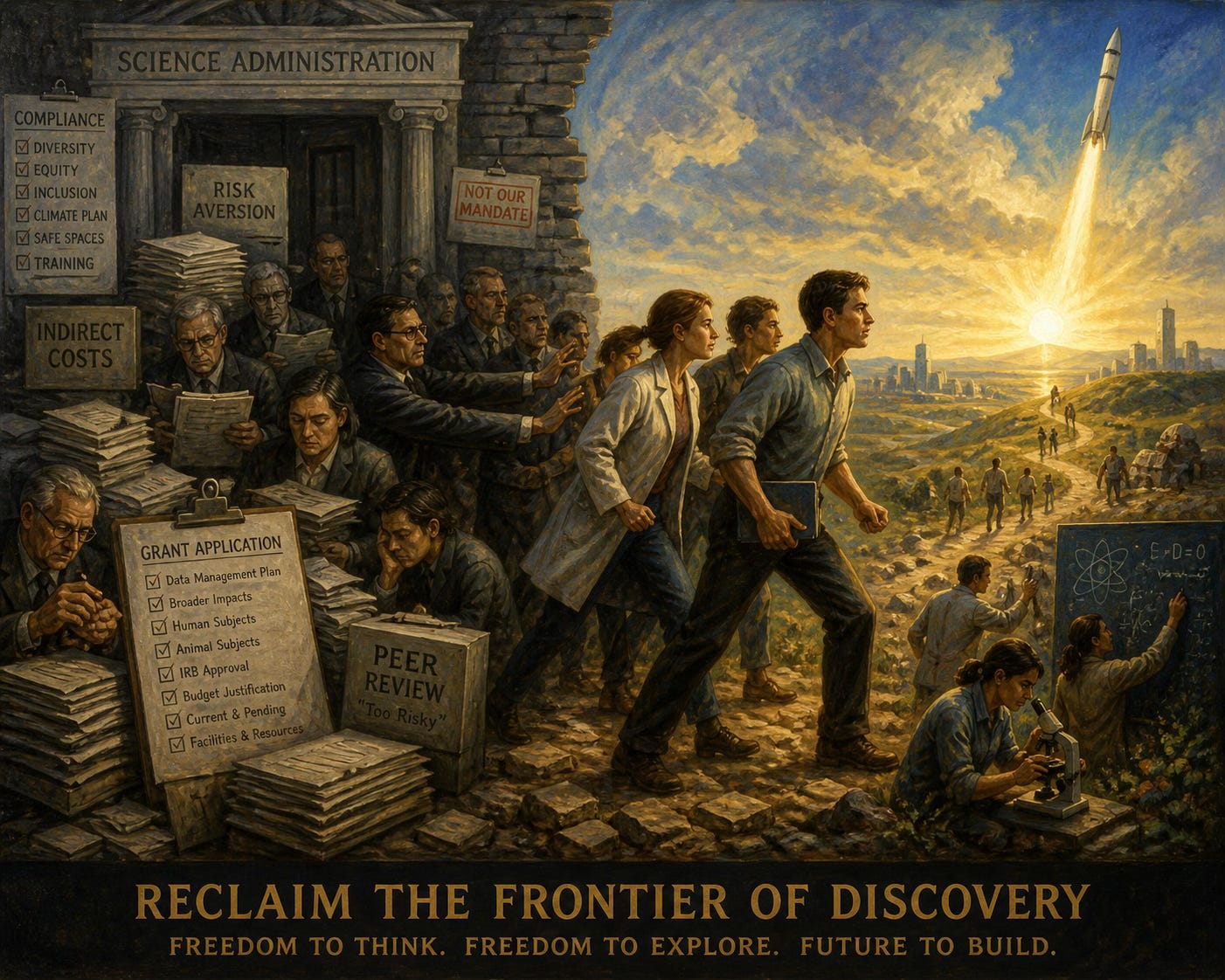

Among the causes of these problems are bad incentives, a system that encourages careerism over love of knowledge, and epistemologically irrelevant race and sex policies (aka DEI). Another problem, however, is how basic science is funded.

Here are a few suggestions on how scientists and their proposals for support might be better evaluated.

Science: Applied and Basic

There are two types of science, applied and basic. The two areas often overlap; nevertheless there is a clear difference between them. Applied science involves projects: plans to achieve a well-defined objective. The question is given; the project aims to answer it. Biomedicine, forensics and materials science provide many examples: projects to solve a medical device problem, discover the cause of a specific phenomenon, cure a particular medical condition or strengthen an artificial fiber. All engineering is applied science.

Once the objective is known, the course of action in such a case will be pretty well laid out. The questions to be asked and the probable answers are well-defined. A lengthy research proposal is justified. The success or failure of applied projects is relatively easy to evaluate: Does the engine work? Did the creatively designed new bridge hold up in a storm?

Basic science is quite different. Here the aim is to understand nature through novel insights and the discovery of new phenomena. The aim is to find the right question rather than to solve a known problem. In applied science, the goal and, for the most part, the questions to be asked are given in advance. But in basic science, the goal is vague and the questions to be asked emerge from the work; they cannot be detailed in advance. Yet now, proposals of both kinds are evaluated by government agencies in exactly the same way.

Most academic science is funded by the National Institutes of Health and the National Science Foundation. These agencies make no real distinction between basic and applied science proposals. All are treated as well-defined projects with a series of anticipated experiments and their probable conclusions, all described in some detail. This process is now incredibly onerous: my first NIH proposal several decades ago was about ten double-spaced pages. Now single-spaced proposals of more than one hundred pages are routine.

Evaluating an applied science project is fairly straightforward. The aim is to develop a more efficient jet engine or battery, say. An applied research proposal can usually lay out the steps to be taken and their costs. The proposal may be lengthy, because many details can be specified in advance. The aim is well-defined and judging success or failure is relatively straightforward.

Basic Research

But how do you evaluate a basic science proposal? Preferably by the results: What new phenomena were discovered? How important are they? What new understanding was achieved? But, understanding of what? And what new phenomena, and when? Obviously, these things are themselves uncertain and cannot be specified in advance.

Moreover, most important new discoveries will take many years to arrive, because many false hypotheses must be tested along the way. Most of the major findings of basic science, from Darwin’s theory to the periodic table, have required many years to achieve. Others, like the discovery of X-rays or penicillin, were accidental. Wilhelm Roentgen and Alexander Fleming were smart enough to notice, respectively, the glowing of a screen and the failure of microbes to grow near some fungus in a Petri dish.

The relevant variable, beyond the basic qualifications of the researcher and the proposed budget, is the quality of the scientist. I intentionally refer to an individual, not a research team. Creative products, whether in art, literature or science, are almost invariably made by an individual or a small group (Watson and Crick were unusual!). A team may well be necessary to confirm a discovery, look for exceptions and so on. But the original finding, achieved either via creative thought or serendipitous perception, is usually due to a thoughtful observer, who is usually a single man (yes, again usually a man).

Hence, the creativity, curiosity, perseverance and skills, observational as well as cognitive, of the scientist are in fact the main factor in the success of basic science.

Government science agencies now make no effort whatsoever to assess these qualities as part of the grant proposal process. This was not always the case. NIH at one time did give long-term, even lifetime, salary as well as research support to a few people with outstanding track records. These awards supported the individual rather than the project. Long-term government support for individuals like this has essentially ceased to exist. Now support is limited to five years and usually provides salary only.

This old program had its limitations, of course. One problem is that scientists in many areas are at their most creative when they are young, before they have had a chance to build a track record. But there has been no effort to assess scientific potential by other means, although much is known about the character and talents of gifted scientists like Richard Feynman, Francis Crick and Barbara McClintock. Perhaps an appropriate psychological profile should be part of a young scientist’s basic research proposal? The proposal itself could be quite short: just a budget and a CV, plus a page or two. That would be a welcome change from the present, when most grant-supported scientists spend as much time writing proposals as doing science.

Competitive Evaluation

It makes sense to evaluate applied science proposals in a directly competitive way. A review committee specialized in the relevant area can ask questions: How important is the goal of the project? How technically qualified is the applicant? How likely is the proposed approach to succeed? This approach can work for applied science.

Unfortunately, as an approach to basic science it is misguided, because the most important variable is the talent of the investigator. His broad area of inquiry can be evaluated, since some areas are more interesting and potentially important than others, although these judgements are necessarily uncertain: Who knew that Roentgen’s glowing screen would lead to X-rays? Who knew that Darwin’s eight-year struggle to understand species of barnacles would give him a better understanding of evolution? The evaluative point is: would the kind of person capable of attending to such apparently irrelevant things have an edge in our current funding system? Probably not, since the relevant psychological profile is never created.

So we need a better system for evaluating basic science. It should be both competitive and long term. It should support individuals, not projects, and the competition should be between programs rather than between individuals. That is, the competition should be between different ways of selecting promising scientists, not between the scientists themselves, because we can expect many to fail.

The point is that basic science needs to be evaluated over the long term and depends more on individuals than teams. Basic science cannot be judged project by project, but competition between different approaches to the selection of investigators seems like a promising start. Science is an evolutionary process: variation, then selection. The present process, with just one or two agencies funding all research in an area, allows for almost no variation from which to select.

The system needs to be rethought. Unfortunately, NSF, far from favoring individual scientists, has increasingly emphasized team research, which has begun to lead to support for projects that are more political than scientific: for the study of “campus intersectionality” or “increasing diversity” in computing. The point is, the scientific-review process needs to be reexamined. The present system has become sclerotic and political. We need something better.

J. E. R. Staddon

James B. Duke Professor, Department of Psychology and Neuroscience. Professor, Department of Biology, Emeritus, Duke University.

Also, respectfully remind everyone about the "Science" governing Covid and the concerted efforts to silence and ostracize any dissent from the Party Line. Shameful episode in the history of scientific merit. Indeed, Biden labeled these dissidents as "disinformation" agents.......

All in all, cogent and indeed exemplary. When scientific research congeals into "Science"...it's a short evolution to orthodoxy, and thus the end of critical thinking and free inquiry. DEI only exacerbates this toxic trend in both selections of topics and researchers. Stalin and Hitler would surely approve of the selection of "scientists" for political advantage. When I see any paper on equity, I cringe....I can expect a diatribe on White Supremacy......